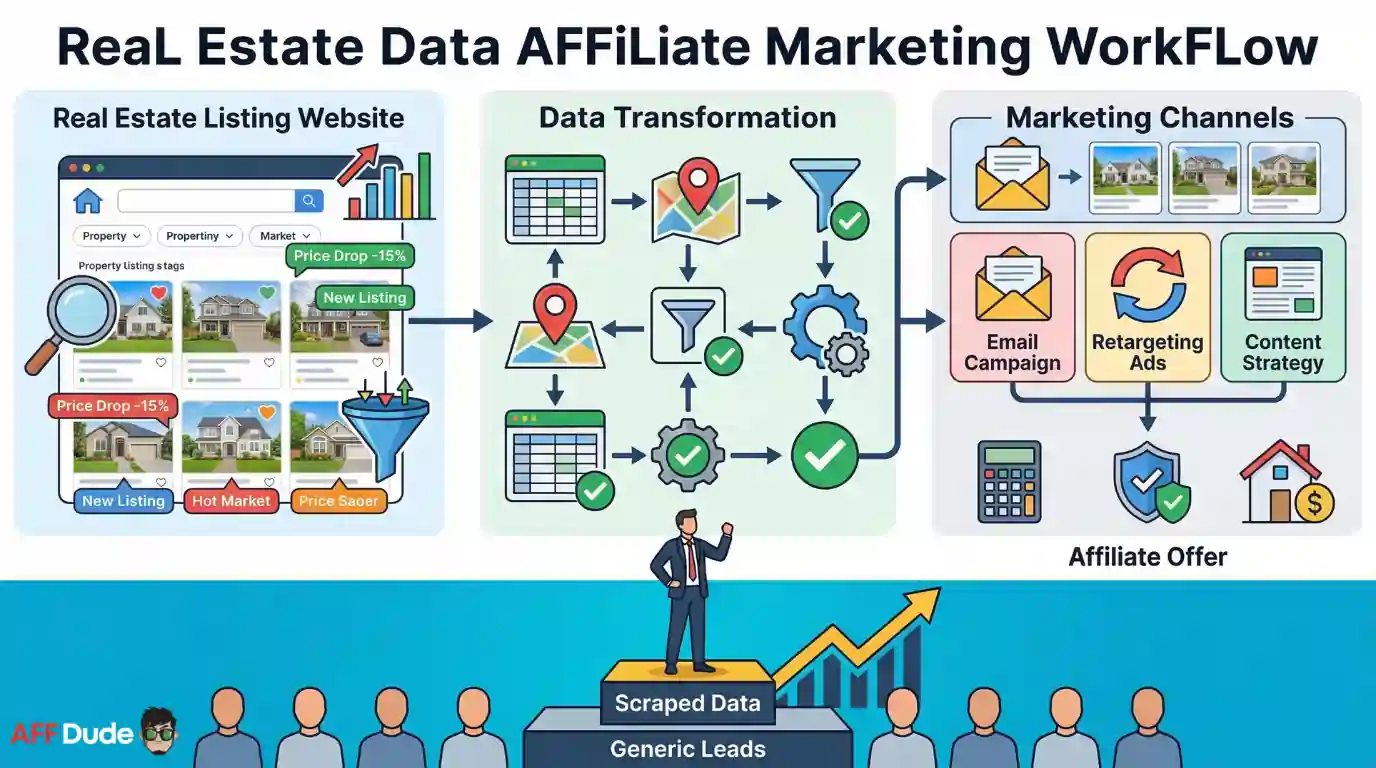

Real estate is a goldmine for affiliate marketers. Every listing carries buyer intent, location data and contact details that feed affiliate lead generation campaigns.

But scraping property portals at scale is not easy. Sites like Zillow, Realtor.com and Redfin block bots fast.

You need city-level proxy coverage, stable sessions and long-running crawlers to pull it off. That is exactly where Decodo steps in.

If you sell mortgage tools, home insurance or moving services as an affiliate, real estate listing data is your best friend.

In this guide, you will learn how to scrape property listings without getting blocked and turn raw data into high-converting leads.

Why Real Estate Data Matters for Affiliate Marketers

Every property listing holds signals. Price drops show motivated sellers. New listings reveal active buyers. Location patterns expose hot markets.
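As a rough illustration of how those signals can be pulled out of scraped data, the sketch below compares two snapshots of the same market. The snapshot layout (a listing ID mapped to a price) and the function name `diff_snapshots` are assumptions made for this example, not a format any portal provides.

```python
def diff_snapshots(previous, current):
    """Compare two {listing_id: price} snapshots taken at different times."""
    # Listings that appear only in the newer snapshot
    new_listings = sorted(set(current) - set(previous))
    # Listings present in both snapshots whose price went down
    price_drops = {
        listing_id: (previous[listing_id], price)
        for listing_id, price in current.items()
        if listing_id in previous and price < previous[listing_id]
    }
    return new_listings, price_drops


# Made-up sample data for illustration
yesterday = {"A1": 450_000, "B2": 320_000}
today = {"A1": 435_000, "B2": 320_000, "C3": 510_000}

new_listings, price_drops = diff_snapshots(yesterday, today)
print(new_listings)  # ['C3']
print(price_drops)   # {'A1': (450000, 435000)}
```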

Affiliate marketers use real estate scraping tools to collect listing data from multiple portals. You then match listings with relevant affiliate offers like mortgage calculators, insurance quotes or home valuation tools.

A single scraped dataset from one city can power email campaigns, retargeting ads and content strategies for weeks. But you need clean, accurate and geo-specific data to make it work.

Raw listing data becomes your competitive advantage. Most affiliates rely on generic lead sources. You go straight to where buyer and seller intent lives.

What Makes Real Estate Sites Hard to Scrape

Property portals invest heavily in anti-bot detection systems. Zillow, Realtor.com and Redfin all use advanced fingerprinting, rate limiting and CAPTCHA walls.

Here is what you are up against:

A basic scraper with datacenter proxies will get flagged within minutes. You need residential IPs that mimic real user behaviour and hold sessions steady across pages.

How City-Level Proxy Coverage Changes Everything

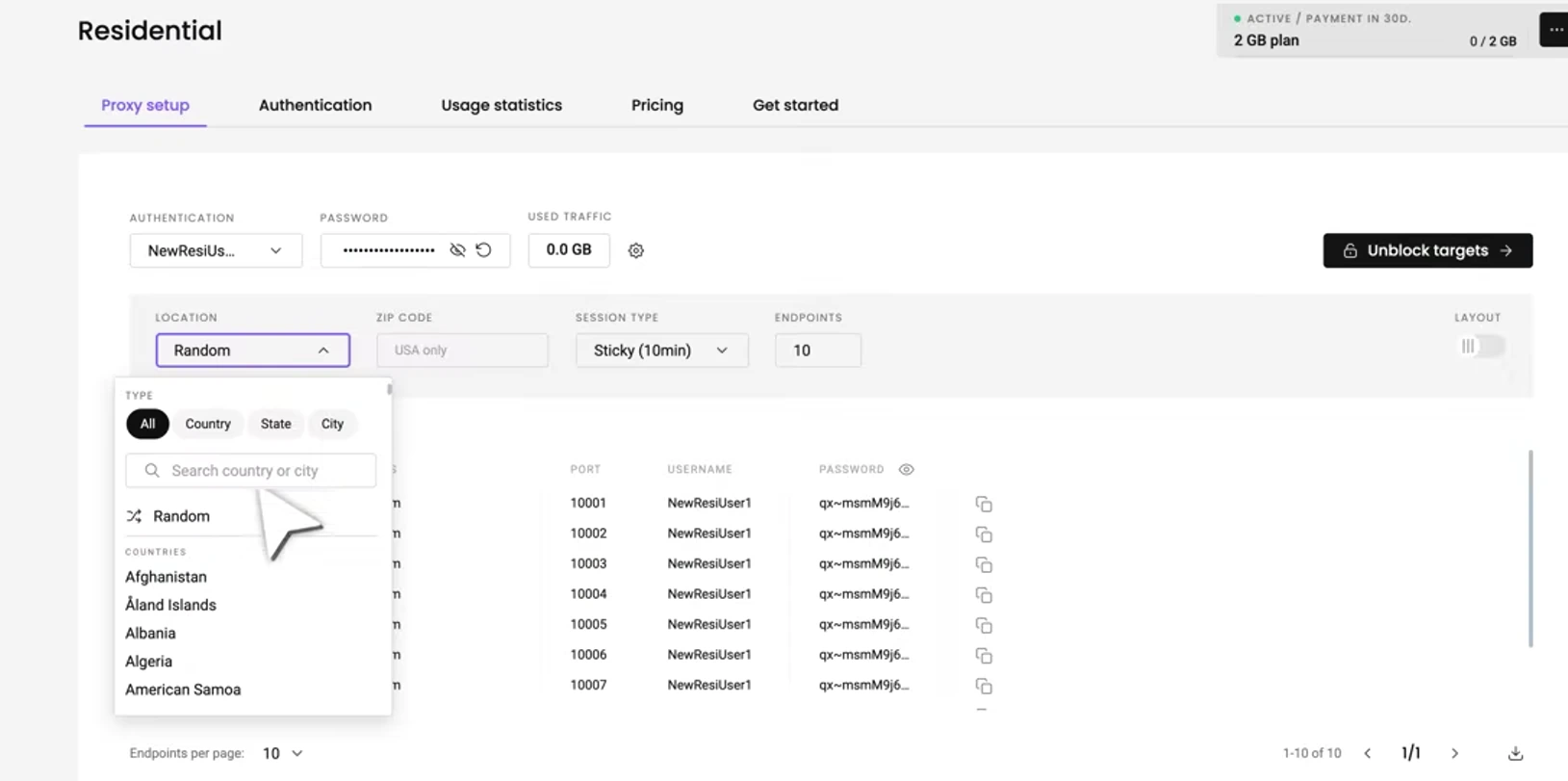

Scraping real estate data requires location accuracy. A listing in Miami shows different prices, agents and details compared to one in Chicago.

City-level proxy targeting lets you route requests through IPs in specific cities. You see exactly what a local buyer would see. No redirects. No geo-restricted content blocks.

Decodo offers residential proxy city targeting across 195+ locations worldwide. You can pick IPs from New York, Los Angeles, London, Mumbai or thousands of other cities.

| Feature | Datacenter Proxies | Decodo Residential Proxies |
|---|---|---|
| IP Pool Size | 50K–500K | 125M+ ethically sourced |
| City-Level Targeting | ❌ Limited | ✅ Thousands of cities |
| Detection Risk | 🔴 High | 🟢 Very Low |
| Session Stability | ❌ Drops frequently | ✅ Sticky sessions up to 24h |
| Success Rate | ~60–70% | 99.86% |
| Starting Price | $0.50/IP | $2/GB |

With Decodo, your scraper appears as a genuine local visitor in any target city. Property portals serve full listing data without triggering blocks.
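One way to organise city-level routing in code is a simple lookup from city name to proxy URL. This is a minimal sketch: the hostnames, ports and the helper name `proxies_for_city` are placeholders invented for the example, so swap in whatever endpoints and credentials your proxy dashboard actually generates for each city.

```python
# Placeholder proxy URLs. Replace each with the endpoint and credentials your
# provider's dashboard generates for that city; these hostnames are not real.
CITY_PROXIES = {
    "miami": "http://USERNAME:PASSWORD@miami.proxy.example:10001",
    "chicago": "http://USERNAME:PASSWORD@chicago.proxy.example:10002",
}


def proxies_for_city(city, city_proxies=CITY_PROXIES):
    """Return a requests-style proxies dict for the given city."""
    key = city.strip().lower()
    if key not in city_proxies:
        raise ValueError(f"No proxy configured for city: {city!r}")
    proxy_url = city_proxies[key]
    # Route both plain and TLS traffic through the same city proxy
    return {"http": proxy_url, "https": proxy_url}


print(proxies_for_city("Miami")["https"])
```

The returned dict has the shape that `requests.Session().proxies` expects, so it can be assigned to a session directly. Failing loudly on an unknown city avoids silently crawling a market from the wrong location.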

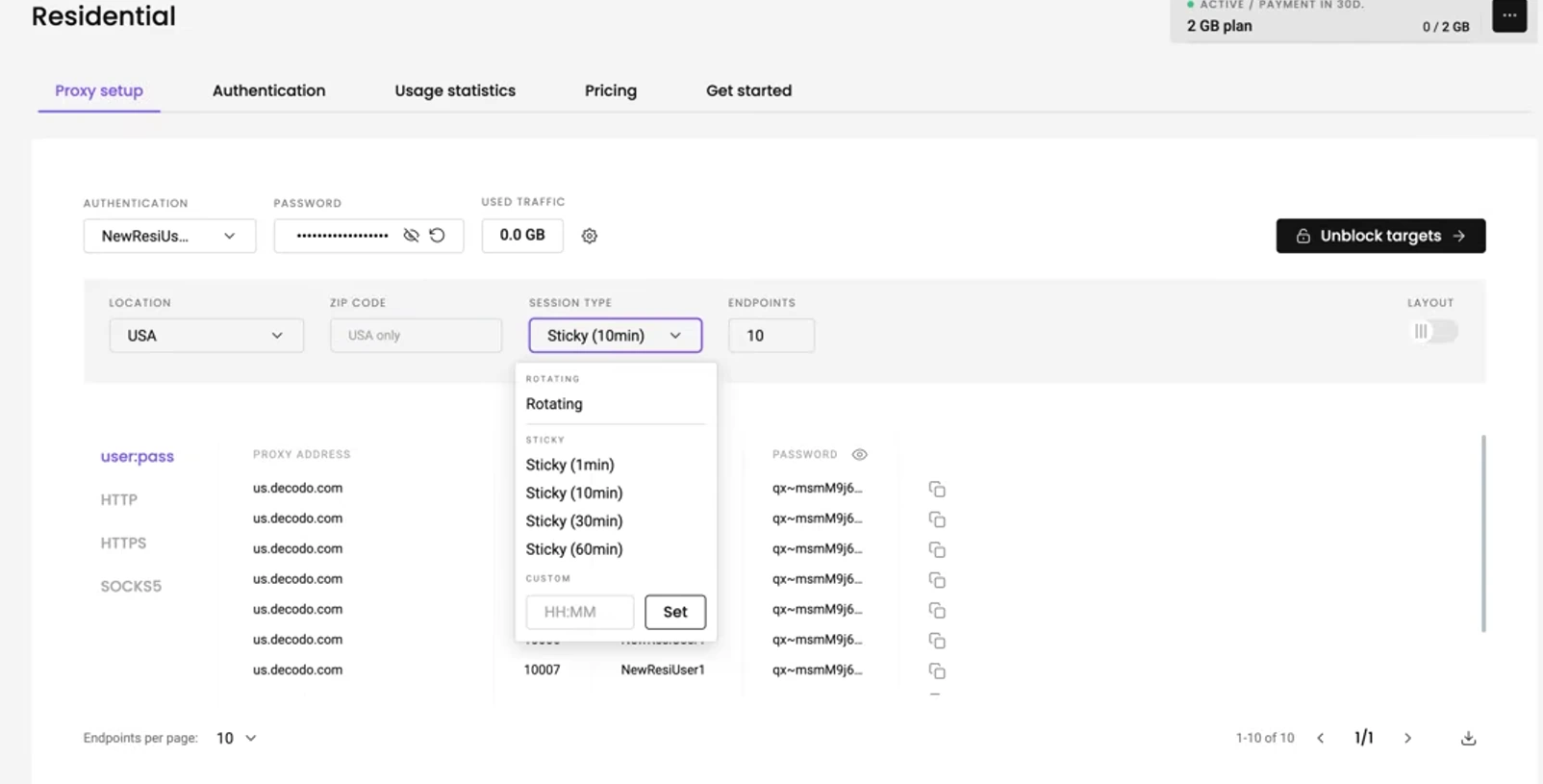

Setting Up Long-Running Crawlers With Stable Sessions

Real estate scraping is not a quick job. You need to paginate through hundreds of listings, follow individual property links and extract nested data.

Long-running web crawlers require sessions that stay alive for minutes or even hours. If your IP rotates mid-crawl, the site sees the same session cookies arriving from a new address, which can invalidate your session and get you flagged.

Decodo solves this with sticky proxy sessions that hold a single IP for a set duration. You can configure session length from 1 minute up to 24 hours ⏱️

Here is a simple Python setup using Decodo residential proxies:

```python
import requests

# Replace USERNAME and PASSWORD with your Decodo proxy credentials
proxy = "http://USERNAME:PASSWORD@gate.decodo.com:7000"

# A single Session reuses cookies and connections across requests
session = requests.Session()
session.proxies = {"http": proxy, "https": proxy}

url = "https://example-realestate.com/listings?city=miami&page=1"

response = session.get(url, timeout=30)
print(response.status_code)
```

With a sticky session configured, you keep a consistent IP throughout your crawl. Pages load as expected. Session cookies persist. Anti-bot systems see a normal browsing pattern.
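To show how a single session can carry a crawl across many result pages, here is a hedged sketch of a pagination loop with a polite delay and simple exponential backoff. The function name `crawl_pages`, the URL template and the retry settings are assumptions for illustration; `session` can be a `requests.Session` or anything else exposing a compatible `.get(url, timeout=...)` method.

```python
import time


def crawl_pages(session, url_template, max_pages, delay_seconds=2.0, max_retries=3):
    """Fetch pages 1..max_pages through one session, backing off on failures.

    `session` is any object with a requests-style .get(url, timeout=...) method.
    Returns the list of page bodies that were fetched successfully.
    """
    pages = []
    for page in range(1, max_pages + 1):
        url = url_template.format(page=page)
        for attempt in range(max_retries):
            response = session.get(url, timeout=30)
            if response.status_code == 200:
                pages.append(response.text)
                break
            # Wait longer after each failed attempt before retrying this page
            time.sleep(delay_seconds * (2 ** attempt))
        else:
            # All retries failed: stop instead of hammering the site further
            break
        # Pause between pages so the crawl resembles normal browsing
        time.sleep(delay_seconds)
    return pages
```

Called with the session from the earlier snippet, this might look like `crawl_pages(session, "https://example-realestate.com/listings?city=miami&page={page}", max_pages=5)`. Stopping after repeated failures is a deliberate choice: persistent errors usually mean the site is throttling you, and retrying harder tends to make that worse.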

Building Your Real Estate Scraping Pipeline 🛠️

A proper web scraping pipeline for lead generation follows a clear flow. Here is how to structure yours:

- Define target cities based on affiliate offer availability

- Set up Decodo proxies with city-level targeting for each location

- Configure sticky sessions to maintain crawl continuity

- Build scrapers using Python, Scrapy or Puppeteer for JavaScript-heavy sites

- Extract listing data including price, address, agent info and listing status

- Store data in a database or export to CSV for processing

- Match leads with affiliate offers based on location and buyer intent signals

Each city gets a dedicated proxy endpoint. Your crawler rotates through listings while Decodo handles IP management in the background.
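The extract-and-store steps in the flow above can be sketched with a small record type and a CSV writer. This is only an illustration: the `Listing` fields mirror the data points named in the list, but the class, the function name `write_listings_csv` and the sample values are assumptions rather than a fixed schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Listing:
    """One scraped listing; the field names are illustrative."""
    city: str
    address: str
    price: int
    agent: str
    status: str


def write_listings_csv(listings, fileobj):
    """Write listings to an open text file object as CSV with a header row."""
    writer = csv.DictWriter(fileobj, fieldnames=[f.name for f in fields(Listing)])
    writer.writeheader()
    for listing in listings:
        writer.writerow(asdict(listing))


# Made-up sample record written to an in-memory buffer
buffer = io.StringIO()
write_listings_csv(
    [Listing("Miami", "123 Example St", 450000, "Jane Doe", "active")],
    buffer,
)
print(buffer.getvalue())
```

When writing to a real file, open it with `newline=""` as the `csv` module documentation recommends, for example `open("listings.csv", "w", newline="", encoding="utf-8")`.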

What Data Points to Extract From Listings

Not all listing data is useful for affiliate marketing lead generation. Focus on fields that help you qualify and convert leads.

Pair these data points with affiliate products like home loan comparison tools, insurance quote generators or property management software. Location-specific data drives higher conversion rates because offers match user intent.
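Pairing listings with offers can be as simple as a rule lookup. The sketch below assumes a hand-maintained offer catalogue; the offer names come from the products mentioned above, while the city and status rules, the dict layout and the function name `match_offers` are invented for the example.

```python
# Hypothetical offer catalogue: which cities and listing statuses each offer suits
OFFERS = [
    {
        "name": "Home loan comparison tool",
        "cities": {"miami", "dallas"},
        "statuses": {"active", "price_reduced"},
    },
    {
        "name": "Insurance quote generator",
        "cities": {"miami", "phoenix"},
        "statuses": {"active", "pending"},
    },
    {
        "name": "Property management software",
        "cities": {"dallas"},
        "statuses": {"rental"},
    },
]


def match_offers(listing, offers=OFFERS):
    """Return the names of offers whose city and status rules fit the listing."""
    city = listing["city"].lower()
    status = listing["status"].lower()
    return [
        offer["name"]
        for offer in offers
        if city in offer["cities"] and status in offer["statuses"]
    ]


sample = {"city": "Miami", "status": "active", "price": 450000}
print(match_offers(sample))
```

Keeping the rules in data rather than in code means new affiliate offers can be added without touching the matching logic.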

Why Decodo Is Built for Real Estate Scraping at Scale

Decodo (formerly Smartproxy) is purpose-built for large-scale web data collection. Here is why affiliate marketers choose it for real estate scraping:

Plans start at just $2/GB with a 3-day free trial available. No credit card needed to test. You also get a 14-day money-back guarantee on all paid plans.

Decodo integrates with Scrapy, Puppeteer, Selenium and popular automation platforms. Setup takes minutes using dashboard controls or API endpoints.

Over 135,000 clients already trust Decodo for web data collection. Industry leaders like TechRadar, PCMag and G2 have recognised it as a top proxy provider in 2025 and 2026.

Scaling Across Multiple Cities Without Getting Blocked

Once your scraper works in one city, scaling is straightforward. Assign different Decodo proxy endpoints to each target market.

Run parallel crawlers for Miami, Dallas, Phoenix, Atlanta and Seattle simultaneously. Each crawler uses location-specific residential IPs and maintains its own sticky session.

Decodo's unlimited concurrent sessions mean you never hit a connection cap. Your scraping speed stays consistent even when running dozens of city-targeted crawlers at once.
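Running one crawler per city in parallel could look like the sketch below, which uses a thread pool from the standard library. The function name `crawl_cities` and the stub crawler are assumptions; in practice `crawl_city` would be your own function that builds a city-specific session and returns the scraped listings.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def crawl_cities(cities, crawl_city, max_workers=5):
    """Run crawl_city(city) for every city in parallel.

    Returns {city: result}, or {city: exception} for a city whose crawl failed.
    """
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(crawl_city, city): city for city in cities}
        for future in as_completed(futures):
            city = futures[future]
            try:
                results[city] = future.result()
            except Exception as exc:
                # Record the failure so one bad city does not stop the others
                results[city] = exc
    return results


def stub_crawl(city):
    """Stand-in for a real crawler; returns a fake listing count."""
    return len(city)


print(crawl_cities(["Miami", "Dallas", "Phoenix", "Atlanta", "Seattle"], stub_crawl))
```

Threads suit this workload because each crawler spends most of its time waiting on the network. Capping `max_workers` also keeps the total request rate predictable.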

Monitor bandwidth usage and session health directly from Decodo's dashboard. Adjust session duration, swap targeting parameters and track success rates in real time.

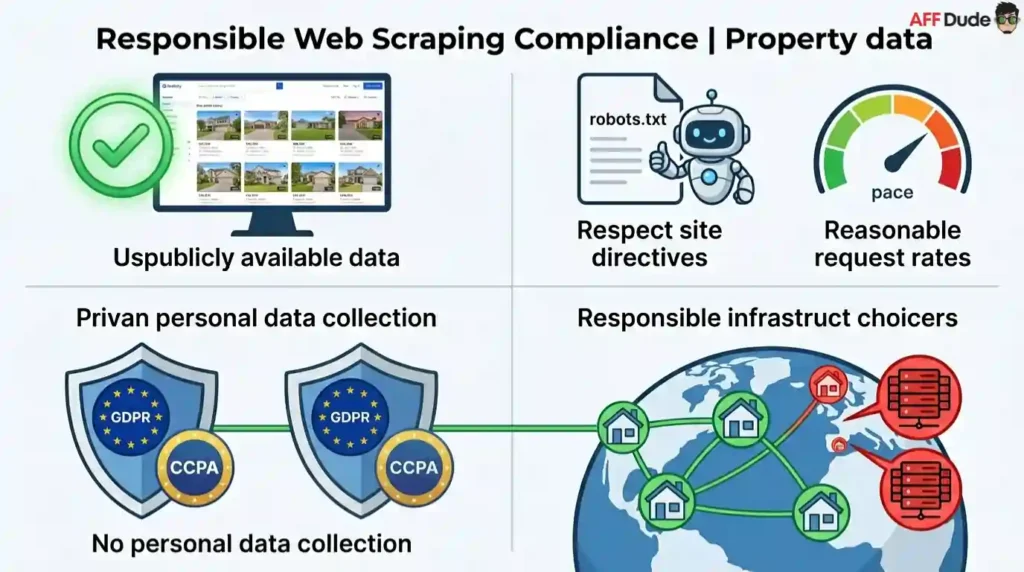

Staying Compliant While Scraping Property Data

Always scrape responsibly. Only collect publicly available real estate data that is visible to any site visitor.

Respect robots.txt directives and keep request rates reasonable. Avoid scraping personal data that falls under privacy regulations like GDPR or CCPA.
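A crawler can check robots.txt rules before each request using Python's standard `urllib.robotparser`. In this sketch the robots.txt content, the user agent string `my-listing-crawler` and the helper name `build_robots_parser` are made up for illustration; a real crawler would download the file from the target site first.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content, invented for this sketch
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Crawl-delay: 5
"""


def build_robots_parser(robots_txt):
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


robots = build_robots_parser(SAMPLE_ROBOTS_TXT)
user_agent = "my-listing-crawler"  # hypothetical crawler name

# Public listing pages are allowed; the account area is not
print(robots.can_fetch(user_agent, "https://example-realestate.com/listings?city=miami"))
print(robots.can_fetch(user_agent, "https://example-realestate.com/account/settings"))

# Seconds to wait between requests if the site declares a crawl delay, else None
print(robots.crawl_delay(user_agent))
```

Feeding the declared crawl delay into the pause between requests is one straightforward way to keep request rates reasonable.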

Decodo's ethically sourced IP network aligns with responsible data collection practices. Server load on target sites depends on your request rate rather than on proxy type, so throttle your crawlers whichever proxies you use.

Focus on public listing information. Prices, addresses and property details posted on open portals are generally the safest data to collect, though each portal's terms of service still apply. Personal seller data behind logins is off limits.

From Raw Listings to Affiliate Revenue

Scraping real estate listings is only step one. Real value comes from turning that data into qualified leads.

Build location-based landing pages using scraped market data. Create content around local property market trends that attracts organic traffic. Feed listing alerts into email sequences tied to affiliate offers.

Segment your scraped data by city, price range and property type. Run targeted campaigns for each segment with matching affiliate products.
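Segmentation by city, price range and property type can be sketched as a grouping step. The price bands, the dict keys and the function names here are arbitrary assumptions chosen for the example; pick bands that fit your own markets and offers.

```python
from collections import defaultdict

# Illustrative price bands as (lower bound inclusive, upper bound exclusive, label)
PRICE_BANDS = [
    (0, 300_000, "under_300k"),
    (300_000, 750_000, "300k_to_750k"),
    (750_000, float("inf"), "750k_plus"),
]


def price_band(price):
    """Return the label of the band that contains the price."""
    for low, high, label in PRICE_BANDS:
        if low <= price < high:
            return label
    raise ValueError(f"Price out of range: {price!r}")


def segment_listings(listings):
    """Group listings by (city, price band, property type)."""
    segments = defaultdict(list)
    for listing in listings:
        key = (listing["city"], price_band(listing["price"]), listing["property_type"])
        segments[key].append(listing)
    return dict(segments)


# Made-up sample data
listings = [
    {"city": "Miami", "price": 450_000, "property_type": "condo"},
    {"city": "Miami", "price": 520_000, "property_type": "condo"},
    {"city": "Dallas", "price": 280_000, "property_type": "house"},
]

for segment, items in segment_listings(listings).items():
    print(segment, len(items))
```

Each segment key can then drive its own campaign, with the matching affiliate product chosen per city and price band.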

👉 Try Decodo free for 3 days and start building your real estate scraping pipeline today.

A well-structured pipeline using Decodo proxies, city-level targeting and stable sessions turns publicly available listing data into a consistent affiliate income stream.
